LinkedIn is Not Breaking the Law. That's the Problem.

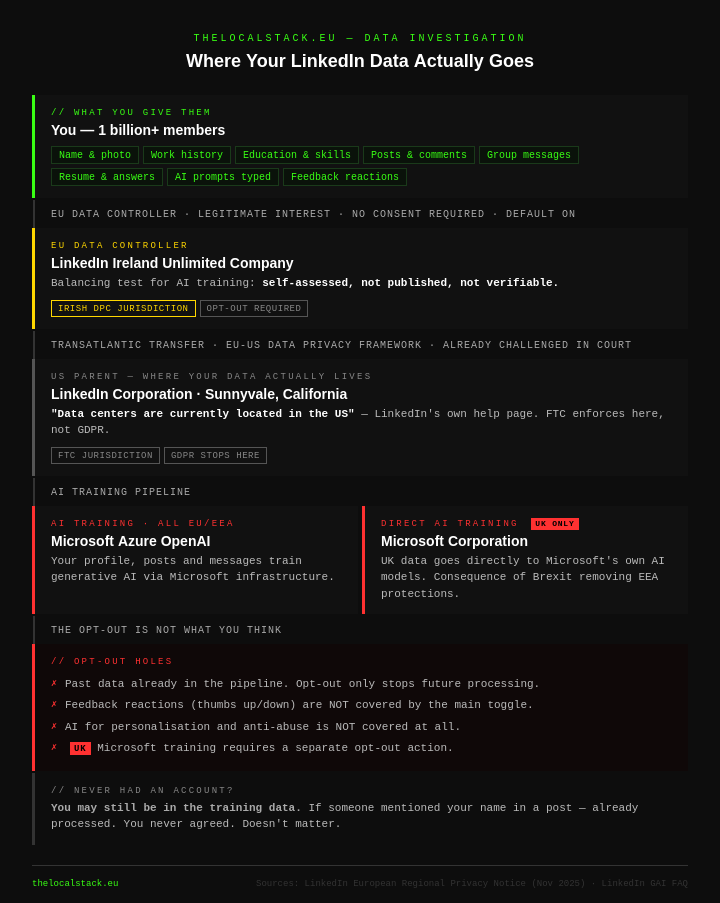

A reader emailed to tell me that LinkedIn has enabled the “Data for Generative AI Improvement” option by default. To be clear, that is the only reason I found out. LinkedIn did not notify me. They updated their privacy notice, but the change is buried in lengthy webpages that almost no one will ever read. Except me.

Where is it and what happens?

Settings > Data and privacy > Data for Generative AI Improvement. Already turned on. Who knows when it was added. I clicked the small “learn more” link, which takes you to a help page. The first thing that stood out was this:

“The Data for Generative AI Improvement member setting is set to ‘on’ by default, unless you opt out by turning it ‘off.’ Turning the setting off means that we (LinkedIn and our affiliates) won’t use the data and content you provided to LinkedIn to train models that generate content going forward. Opting out does not affect training that has already taken place.”

So this is the illusion of opting out. Everything you shared before opting out was already packaged and sent to a database, ready to be devoured by an AI model. Your comments, your thoughts, your public professional persona: all of it became data, ready to be used, extracted, analyzed, and compressed into a huge wall of words.

If you read the help page you find a sharp piece of irony. LinkedIn built a platform on the promise of authentic professional identity. Then they used that authenticity as training data. The trust that makes LinkedIn valuable is the same trust they’re monetizing.

The help page splits LinkedIn’s use of generative AI in two. LinkedIn uses generative AI models to keep the platform safe and compliant with its community policies. It also uses generative AI models for content creation, for example the AI writing assistant that drafts messages for you. Two birds, one stone. Your data feeds both.

Even when you’re out, you’re not

“Even if you have opted out… if you choose to provide feedback to us, we may still use information you include in your feedback to train our content generating AI models. Please be thoughtful about the information that you share in your feedback.”

I think sometimes we live in a reality show. It’s so funny that it starts to become sad. You opted out. You click thumbs down on an AI suggestion. That feedback — your reaction, your correction, your words — still trains the model. The opt-out has a hole in it, and LinkedIn tells you to be careful about what you put in the hole.

The data they use — explicitly listed

They list exactly what goes into AI training:

- Your full profile — name, photo, work history, education, location, skills, certifications, patents, publications

- Everything you’ve ever typed into an AI feature — prompts, search text, questions

- Your resume and screening question answers

- Group messages

- Every post, article, poll response, and comment you’ve ever made

- Your feedback reactions

This is not abstract. This is a complete picture of your professional identity, your career history, your thoughts, your private group conversations — all in a training dataset.

How politics affects your privacy

UK users have it even worse. LinkedIn shares their data directly with Microsoft for Microsoft’s own AI training, not just for LinkedIn’s products: posts, comments, articles, videos, transcripts. This is a direct consequence of Brexit removing EEA protections, and it’s buried on page 36. That’s just one of the smaller consequences of leaving the EU. I’m not pushing political propaganda, just stating the facts. Leaving a framework as complex as the EU has real consequences. Brexit made UK users more vulnerable to surveillance capitalism and data hoarding.

How the legal basis is being exploited

LinkedIn chose legitimate interest as the legal basis for training AI models on your data. This is a deliberate legal choice that bypasses the need for your consent. Under GDPR, legitimate interest requires a genuine balancing test: the company’s commercial interests must not be overridden by your rights and freedoms. LinkedIn conducted that test itself and, unsurprisingly, concluded in its own favor. No independent assessment. No transparency about the methodology. The test itself is not published. You cannot verify it. You cannot challenge it. It exists only as words in a legal document.

The transfer

Your data leaves Ireland, crosses the Atlantic under a transfer arrangement whose earlier versions were struck down by the EU’s top court, lands on US servers, and falls under FTC jurisdiction. GDPR is where your data starts. It’s not where it ends up. LinkedIn explicitly states it is subject to the investigatory and enforcement powers of the US Federal Trade Commission. The FTC is a US agency. Your data protection, once it reaches US servers, depends on US enforcement. Not the Irish DPC. Not GDPR.

What you can do

Step one — turn off the setting: Settings > Data and privacy > Data for Generative AI Improvement > Off.

Step two — submit the Data Processing Objection Form for feedback data and other legitimate interest processing: linkedin.com/help/linkedin/ask/TS-DPRO

Step three — if you’re in the EU, file a complaint with the Irish DPC at dataprotection.ie. Free, no lawyer needed, takes fifteen minutes.

One more thing

“LinkedIn does not intentionally use personal data from non-members to train its generative AI models. However, non-member personal data could inadvertently be used in model training — for example, if a LinkedIn member mentions you in a post or comment.”

People who have never created a LinkedIn account may be in the training data. If someone mentioned your name, your role, your opinions in a post — already processed. You never agreed to LinkedIn’s terms because you never signed up. Doesn’t matter.

This post is about much more than an obscure setting hidden in a menu. It’s about sovereignty. About how these companies capture the output of talented, skilled professionals and exploit it without being transparent. They are not using “stupid” data — they are using intelligent data, coming from experts and professionals who built their reputations in good faith. Politics affects your privacy in much deeper ways than you might think. Laws exist to be enforced. If they are not enforced, they are just shiny legal papers. GDPR was the first of its kind. It paved the way for how we understand and process personal data. But it has limits, and those limits are being pushed by powerful entities against a powerless individual.

Data is not just zeroes and ones in a database. It’s political power. It’s negotiating power. And perhaps a powerful weapon, not as loud as bombs but equally destructive.